Workshop on best practices for successful monitoring and evaluation of development projects

What are the challenges in designing projects with successful monitoring and evaluation (M&E) systems? How do you ensure data collection for M&E is reliable? What is a “theory of change,” and how can it shape M&E over the course of a development project’s lifetime?

These are a few of the major questions raised during the workshop on “M&E Systems for Organizational Effectiveness: Why Organizations Should Care and Strategies for Engagement,” held by TCI-TARINA in partnership with the Tata Institute of Social Sciences (TISS) on February 24th, 2017, in New Delhi. The workshop was attended by senior and mid-level managers from various non-profit, non-governmental, and development organizations.

Theory of change

The lead facilitator, Dr. Mark Constas, Professor of Applied Economics at Cornell University and international M&E expert, began the workshop by introducing a concept known as the “Theory of Change” (ToC) and its importance in the development of an M&E system. A ToC delineates what outcomes or results the project aims to achieve and the main pathways by which it will achieve them.

Co-facilitator Dr. Prabhu Pingali, Director of TCI and also a Professor of Applied Economics at Cornell University, shared his experiences developing a ToC for various projects and emphasized the need to design it with the utmost focus and attention to detail. He indicated that this is particularly critical because the ToC forms the foundation of the project and becomes the reference point for understanding the project's successes and failures.

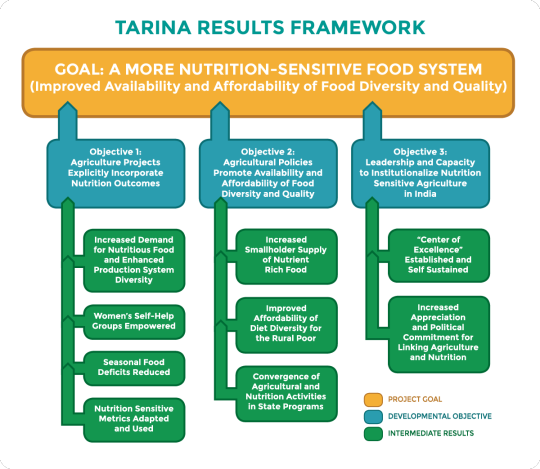

In the case of TCI’s TARINA project, which was established under a grant from the Bill & Melinda Gates Foundation, a Results Framework (pictured below) was devised as the ToC for achieving the project’s overarching goal of creating a more nutrition-sensitive food system in India. Both Prof. Constas and Prof. Pingali recommended setting clear objectives and desired results that meet those objectives, as has been done for TARINA’s Results Framework.

During the discussion, an NGO Director from the audience asked whether the ToC can change over the course of the project. Prof. Constas and Prof. Pingali stated that the ToC might shift, but it is for the organization to decide when they allow it to happen. The ToC should always accurately reflect the cause that the organization is working towards.

Data, data, data

Capturing reliable data was highlighted as a key input for efficient monitoring. One participant commented that data collection is “considered the easiest task” when it is in fact difficult and requires careful, thorough thought. For example, the National Sample Survey (NSS) has been collecting data on dietary diversity by asking respondents to recall what they have consumed in the past 30 days. However, it is now generally accepted that 24-hour or even 3-day recalls yield more reliable data. Similarly, many surveys in India ask respondents for their age instead of their birthdate. Errors in the recorded age of children, for instance, can have a great impact on the survey results as well as on the response to difficulties in early childhood development and maternal nutrition. The last and perhaps most important point was that baseline, midline, and end-line surveys have to include the same sample group and the same indicators to allow an effective comparison for assessing the impact of the project and the degree to which results and objectives have been realized. However, this is often very challenging to do.
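The point about asking for a birthdate rather than an age can be illustrated with a small sketch. It was not presented at the workshop; the function name `age_in_months` and the example dates are assumptions chosen only for illustration. It shows that a child reported simply as "2 years old" could be anywhere from 24 to 35 months old, whereas a recorded birthdate gives the exact age on the survey date.

```python
from datetime import date


def age_in_months(birthdate: date, survey_date: date) -> int:
    """Completed months between a birthdate and the survey date."""
    months = (survey_date.year - birthdate.year) * 12 + (
        survey_date.month - birthdate.month
    )
    # The current month only counts once the day of birth has been reached.
    if survey_date.day < birthdate.day:
        months -= 1
    return months


if __name__ == "__main__":
    survey = date(2017, 2, 24)  # hypothetical survey date
    # Two hypothetical children, both of whom would be reported as "2 years old".
    print(age_in_months(date(2015, 2, 20), survey))  # just turned two
    print(age_in_months(date(2014, 3, 10), survey))  # nearly three
```

For indicators that depend on age in months, such as child growth measures, a gap of this size between two children recorded with the same age in years can change how they are classified.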

Defining a set of “minimum metrics” is very important to data collection. Prof. Constas indicated that large household surveys can be very taxing and an extreme burden on participant households. He stressed that “low burden, high frequency” data collection that captures seasonality and volatility over the course of the year is optimal.

Prof. Prabhu Pingali speaking to workshop participants.

One participant inquired about best practices for handling fatigue on the part of the respondents. Prof. Constas responded that “sample replacement” is a very good solution. There are many ways to do it; for example, you can construct panel data, synthetic panel data, or simulated panels. The most recommended solution is to follow a replacement protocol where you either remove certain respondents entirely from the data collection and go to new households, or you give a vacation time period to each respondent so that they do not get repeat visits too frequently. You could also have groups of respondents that alternate years of participation in multi-year surveys.
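The idea of alternating groups of respondents can be sketched as a simple rotation schedule. This is a minimal illustration, not a protocol described by the facilitators; the function name `rotation_schedule`, the household IDs, and the choice of two groups are all assumptions.

```python
def rotation_schedule(household_ids, n_groups, n_rounds):
    """Return {round_index: [household IDs visited in that round]}.

    Households are split into n_groups rotation groups and each round visits
    one group, so every household rests for n_groups - 1 rounds between visits.
    """
    if n_groups < 1:
        raise ValueError("n_groups must be at least 1")
    # Deal households into groups round-robin so group sizes stay balanced.
    groups = [household_ids[g::n_groups] for g in range(n_groups)]
    # Cycle through the groups, one group per survey round.
    return {rnd: groups[rnd % n_groups] for rnd in range(n_rounds)}


if __name__ == "__main__":
    households = ["H01", "H02", "H03", "H04", "H05", "H06"]  # hypothetical IDs
    schedule = rotation_schedule(households, n_groups=2, n_rounds=4)
    for rnd, visited in schedule.items():
        print(f"round {rnd}: {visited}")
```

With two groups, each household is visited every other round, which corresponds to alternating years in a multi-year survey. A larger `n_groups` lengthens the rest period but means each household contributes fewer observations.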

The facilitators encouraged all workshop participants to think critically about the design of their evaluation surveys. In particular, they emphasized that Randomized Controlled Trials (RCTs) are not the gold standard for all evaluation, and that an RCT should only be used in scenarios where it is relevant and useful. RCTs are limited because they may show an effect without being able to explain “what produced the effect.” Other useful tools include regression discontinuity studies and other quasi-experimental designs.

Ethics and reporting

In addition to M&E, the discussion delved into questions about the ethics of data collection as well as the types of tools for evaluation. Some NGO participants mentioned that they do not receive the credit they seek in publications that involve data from their own projects and also do not receive recognition as reliable evaluators.

Both problems have useful solutions. In the case of publications, NGOs can sign an agreement with all research partners, including a co-authorship agreement, to ensure that recognition is given to contributors. In the case of receiving credit as reliable evaluators, it was mentioned that Institutional Review Board (IRB) certifications and participation in national evaluator associations can help to ensure credibility and enhance the role of the NGO in research. The facilitators shared a resource on the course “Responsible Conduct of Research” offered by the Collaborative Institutional Training Initiative (CITI).

Monitoring and evaluation: A casualty

Despite M&E being critical to informing a project, and therefore a key determinant of its success, budgetary allocation to M&E is in many cases very low. Usually, M&E is the first casualty of budget restructuring.

Prof. Pingali also shared that M&E practitioners should understand both the project and the context of its implementation. He commented that M&E should be treated like an audit, with internal and external evaluators. In other words, organizations should have their project(s) assessed by evaluators from within the organization and from outside the organization.

Donors and stakeholders – Wake up!

A key point that was put forth by the participants was that the onus of embedding M&E into development projects lies with the donors. They asserted that it is the donors that have to develop a robust M&E structure within the project and ensure that funds are allocated toward M&E. Participants also asserted that there is a larger stakeholder ecosystem that needs to buy into the ToC of the organization as well.

Group photo of workshop participants and facilitators.

As the event came to a close, it became clear that there was strong demand among participants for subsequent workshops on various topics discussed, particularly on the latest technical know-how and innovations in evaluation. The project will be looking into these possibilities to potentially offer future workshops as a resource for organizations interested in advancing data-based solutions for development effectiveness.

By Siddharth Chaturvedi

Siddharth Chaturvedi (sc2857@cornell.edu) is a Senior Program Officer for TCI-TARINA, based in New Delhi, India.